Tara’s Takes

The saying used to be “a photo is worth 1,000 words.” Now it should be “unless you can verify it, a photo isn’t worth squat.”

Let’s be honest: AI, or Artificial Intelligence, is here to stay. Just like the dawn of the internet era, it is going to come with good and bad. Right now, we’re experiencing too much bad.

The biggest issue behind the problem is our own belief that if there is a photo, it must be true. Welcome to the land of AI.

Let’s take a look at a couple of examples. First, the shooting of Renee Good by an ICE agent wearing a mask. Shortly after that incident in January, people were using AI to “unmask” the shooter. The problem is, no one knows what the shooter actually looks like under the mask. Assumptions were made. Photos were shared. Finally, the Star Tribune had to release a statement. Why, you may ask? Because AI and the internet decided the shooter was Steve Grove, the CEO and publisher of the Tribune.

First off, I’ve met Steve Grove and I can tell you, the man’s build and face are nothing like the person who pulled the trigger.

Secondly, he’s a newspaper publisher. Our best weapon is the pen: the power of the press! He is not working for ICE. If he were, he wouldn’t be the publisher of the newspaper. I’m sure ICE agents make way more money.

The problem behind it was that the computer-generated image was catching fire because no one bothered to question it. “I have a photo, it must be true!”

Next, the Bad Bunny halftime show and the uproar before he performed. I didn’t know who Bad Bunny was, but then again, I don’t know most current popular artists. However, someone said after the Super Bowl that they let him perform despite burning an American flag at one of his concerts while wearing a dress. That made me pause. I don’t agree with burning the American flag, and it was concerning to me that they would allow someone who did that to perform at halftime. So I looked into it.

Guess what? It didn’t happen. It was AI-generated. Google’s own AI chatbot, Gemini, detected its watermark and said Google’s program was used to make it. Some AI-generated images, like those made with Google’s tools, have invisible watermarks embedded into the pixels.

The image was shared across social media with hate-filled messages that were written because someone took a photo as truth instead of actually questioning something they saw.

You see it constantly on social media. The majority of videos depicting crazy scenes are AI-generated. Have you seen the one where a bison charges into the wall of windows in a house, comes in, and then runs back out? The funniest thing happens: it runs right through a steel beam. The beam goes through the bison like it’s a ghost. But no one is catching that.

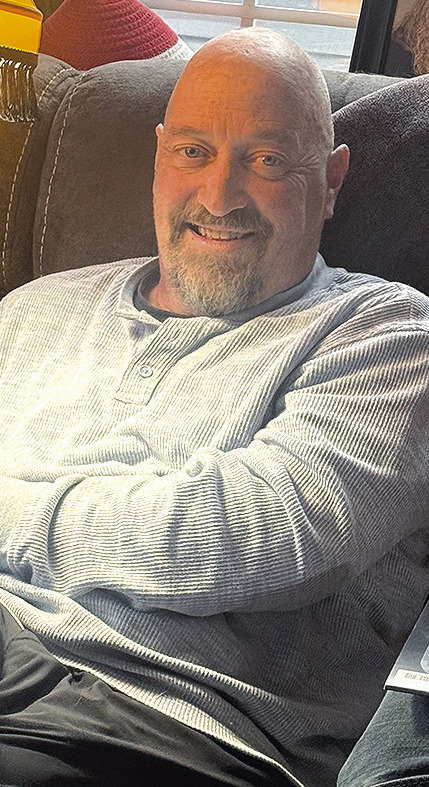

HOW DO YOU GET from the handsome journalist above to the carefree kid-at-heart below? AI, of course.

Here’s the scary thing about it. If those images and others are so easy to create, it is only a matter of time before people use these tools on more than just celebrities or politicians. If we thought social media opened a new form of bullying, imagine what AI-generated photos could do.

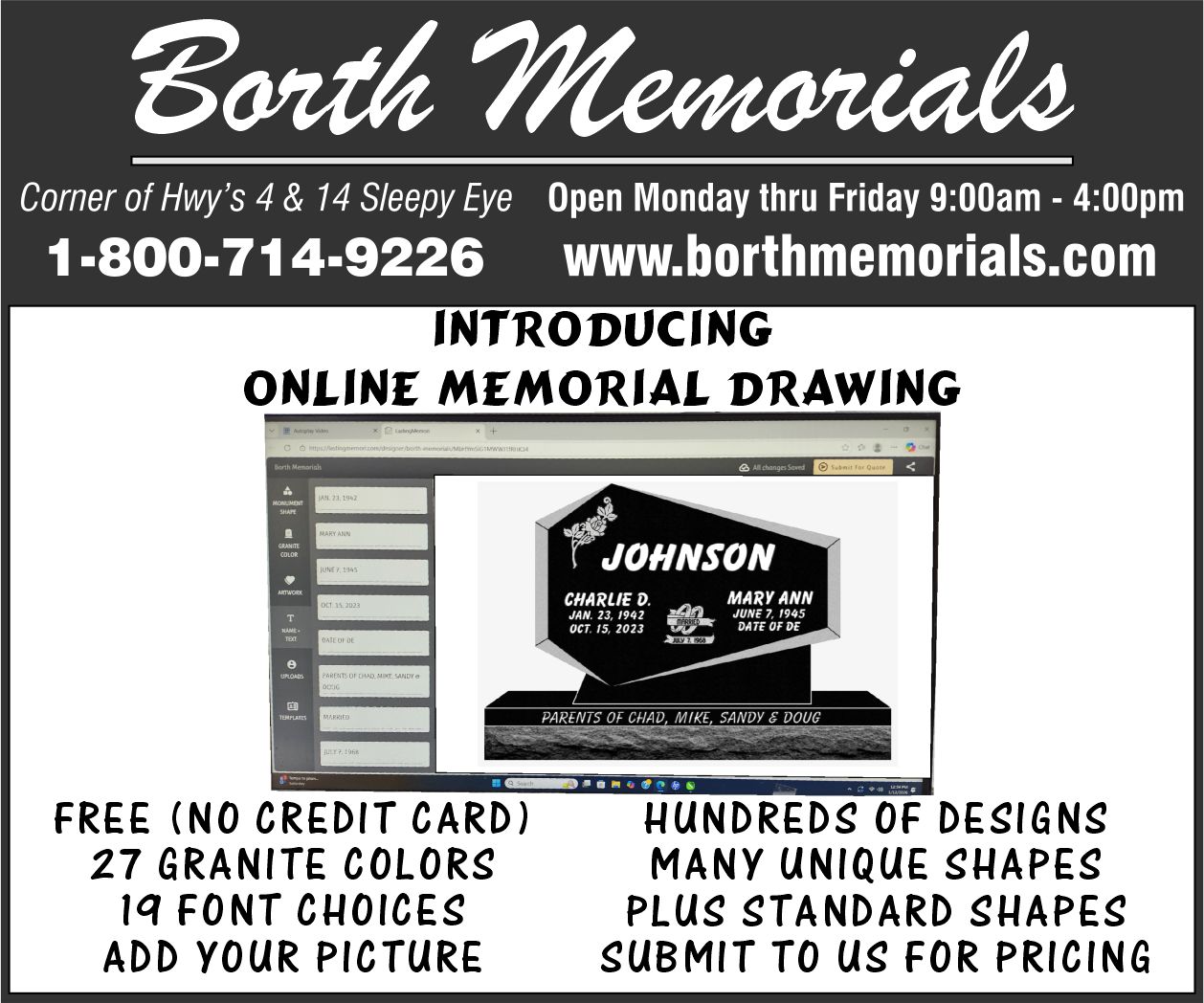

Let me show you how easy it is. I took a photo of Per that I had on my phone (it’s on Page 4) and uploaded it to an AI chatbot, and in three commands, I had him having the time of his life on the Kiddie Coaster. The best part: not only did he go to the fair, he got married too! I did not ask the chatbot to add a wedding ring. I didn’t even notice it to begin with. Per caught that detail. But in all honesty, this was a fun photo to do.

Again, therein lies the problem. All the photos you see online can be just that — fake. Our world is changing at a rapid pace. Now more than ever, we need to take it upon ourselves and truly question what we see, especially online.

The photos that accompany this column will be the only time we use AI-generated photos in our paper. Other than the occasional submitted photo, our images are actually taken by us humans.

But that doesn’t mean our world isn’t filled with AI — Artificial Inaccuracies.